7 Out-of-the-Box Applications of AI in Manufacturing

Here's the list of the most prominent AI applications in the manufacturing industry. From defect detection to predictive maintenance and automated quality control—learn how AI is shaping the future of manufacturing.

Alberto Rizzoli

Co-founder & CEO

Here are a couple of interesting facts:

Reports suggest that the global market for AI in manufacturing is expected to reach $9.89 billion by 2027.

Research suggests that European manufacturers are already embracing the AI surge, with 69% of German manufacturers saying they are ready to implement some form of AI in their operations soon.

The European Commission estimated that, in some industries, as much as 50% of production can end up scrapped because of defects.

Defect detection, predictive maintenance, liquid level analysis and asset inspection are all being shaped by AI solutions based on computer vision and machine learning.

This ushers in a new epoch of manufacturing—Industry 4.0—which removes the need for time-consuming and tedious checks and replaces them with computer vision systems ensuring higher levels of quality control on the production assembly line.

This is key because AI can spot defects that are otherwise easy to miss with the naked eye.

Here’s what we’ll cover:

Defect inspection

Quality assurance

Product assembly

PPE detection

Reading text and barcodes

Inventory management

Predictive maintenance

We’ll also be highlighting a number of current AI use cases in manufacturing, and describing how companies use training data platforms (such as V7) to train and deploy AI models.

Defect inspection

Quality control was Henry Ford’s dream over a hundred years ago. But because the traditional assembly line has always relied on human beings to do their bit, it’s always been at the mercy of human error.

The naked eye can only see so much.

Fortunately, AI can see so much more.

Computer vision helps manufacturers with defect inspection via automated optical inspection (AOI). Using multiple cameras, it more easily identifies missing pieces, dents, cracks, scratches and overall damage, with the images spanning millions of data points, depending on the capability of the camera.

Companies are already adopting AI visual inspection. For instance, FIH Mobile are using it in smartphone manufacturing to highlight defects.

Using computer vision to spot defects has numerous benefits. It allows for the early detection of defects, and it also lets manufacturers gather multiple statistics that will help them improve their assembly lines going forward.

Ultimately, computer vision will reduce the margin of error and waste, while saving time and money.

Learn more about training defect inspection models with V7.

AI can carry out defect inspections using techniques such as template matching, pattern matching, and statistical pattern matching. Inspections are fast and accurate, and the system can also learn from the defects it encounters so that, over time, it gets even better at its job.

Moreover, because computer vision systems are trained on thousands of datasets, they can overcome the shortcomings of AOI, including image quality issues and complicated surface textures, to arrive at a precise assessment.
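
To make template matching, one of the techniques named above, a little more concrete, here is a minimal sketch using NumPy. It slides a reference image of a good part (the template) over a camera image and scores each position with normalized cross-correlation. The function names, the toy 3x3 "part" and the 0.9 threshold are all assumptions made for illustration, not part of any specific product.

```python
import numpy as np

def match_template(image, template):
    """Return the best normalized cross-correlation score and its (row, col)."""
    th, tw = template.shape
    t = template - template.mean()
    t_norm = np.sqrt((t ** 2).sum())
    best_score, best_pos = -1.0, (0, 0)
    for r in range(image.shape[0] - th + 1):
        for c in range(image.shape[1] - tw + 1):
            patch = image[r:r + th, c:c + tw]
            p = patch - patch.mean()
            denom = t_norm * np.sqrt((p ** 2).sum())
            if denom == 0:
                continue  # flat patch or flat template: correlation is undefined
            score = float((p * t).sum() / denom)
            if score > best_score:
                best_score, best_pos = score, (r, c)
    return best_score, best_pos

def is_defective(image, template, threshold=0.9):
    """Flag the part if nothing in the image matches the template well enough."""
    score, _ = match_template(image, template)
    return score < threshold

# Toy data: a cross-shaped "part" and a copy with its centre missing.
golden = np.array([[0, 1, 0], [1, 1, 1], [0, 1, 0]], dtype=float)
good_part = np.zeros((8, 8))
good_part[2:5, 3:6] = golden
bad_part = good_part.copy()
bad_part[3, 4] = 0.0

print(is_defective(good_part, golden))  # False
print(is_defective(bad_part, golden))   # True
```

In practice, an optimized library routine and real camera images would replace the explicit loops and toy arrays; the sketch only shows the scoring idea.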

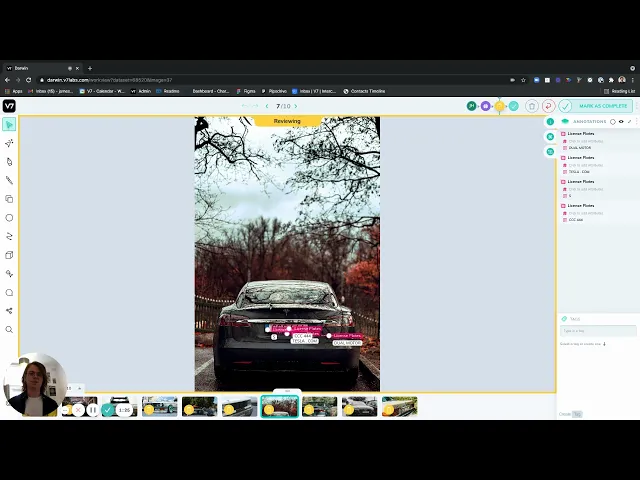

Using V7’s software, you can train object detection, instance segmentation and image classification models to spot defects and anomalies.

The more data you feed into the system, the easier it will be for the system to learn more about different types of defects.

This allows it to make more accurate predictions about the future quality of a material or product, helping your company move toward error-free production.

Naturally, there are still challenges involved. These include a lack of training data, poor quality images/videos, as well as initial setup costs.

Quality assurance

A report showed that multiple organisations are struggling with quality assurance.

And the problem is that quality-related costs are putting a huge dent into sales revenue (often as much as 20%, but sometimes as high as 40%).

These are staggering numbers. However, what we can deduce from this is that if companies were able to improve quality assurance, profits would soar.

Let’s take an example of what we mean. Fast-moving consumer goods (FMCG) companies have long shared a problem: printed labels of such poor quality that, when the code is applied to a wet label, the whole production line is brought to a standstill.

Computer vision solutions like APRIL Eye are rectifying these issues, using image classification and object detection algorithms trained on very large datasets to verify date and label codes at speeds of 1,000+ packs per minute.

Challenges like font distortion, missing text and varying fonts are overcome, and the production line isn’t brought to a standstill.

Indeed, computer vision is playing a key role in the overall quality assurance processes in the manufacturing sector. Industries that are benefiting from its role in production process automation include electronics, automotive, general-purpose manufacturing and many, many more.

For instance, the automotive industry benefits from paint surface inspection, foundry engine block inspection and press shop inspection. Computer vision systems are able to spot cracks, dents, scratches and other anomalies.

Want to learn more? Check out 27+ Most Popular Computer Vision Applications and Use Cases

Meanwhile, in the electronics industry, the same systems can detect defective components, defective gluing and missing pieces, while in the general-purpose manufacturing industry, it can help with surface inspection, fabric inspection and packaging inspection.

Product assembly

Elon Musk has long been seen as a trailblazer—a Herculean man of science who’s constantly breaking new ground.

And the ground he breaks isn’t always as extravagant as sending rockets to Mars—it’s equally as grounded as improving Tesla’s production lines, which are now over 75% automated.

Why does this matter?

It matters because manufacturers—as part of the industry 4.0 evolution—are in general embracing automated product assembly processes.

Computer vision is used by multiple manufacturers to help improve their product assembly process. For example, using a computer vision inspection system to build 3D modelling designs, manufacturers are now able to streamline specific tasks that human workers have traditionally struggled with.

Computer vision also assists operators with Standard Operating Procedures when the operators have to switch products numerous times in one day. This allows for more efficient processes. Moreover, it provides the workers with instructions to help them complete each step correctly.

Managers are also informed each time there’s a malfunction or other type of problem that needs to be rectified ASAP. To help with this, CV-powered cameras are installed, which feed images into an AI algorithm, which in turn scans the images for faults. When an issue is flagged by the algorithm, the manager is instantly notified and can then take action.

In a similar vein, object detection and object tracking are used to help manufacturers spot anomalies on the assembly line. Such anomalies might include cracks, scratches and other defects.

And while robotic arms have been used in the product assembly process for a few years now, computer vision is able to improve their precision further by guiding and monitoring their movements.

Ultimately, improving product assembly processes via computer vision lowers the cost of production in the manufacturing industry by completing assembly processes with less error.

PPE detection

According to NIOSH, more than 2,000 work-related injuries occur each day in American workplaces. Meanwhile, 80% of people at the American Society of Safety Engineers conference reported having seen workers operating without safety equipment, also known as personal protective equipment (PPE).

Moreover, 30% said they had seen workers operating without safety equipment on multiple occasions.

Naturally, those 2,000 work-related injuries can be massively reduced and even prevented altogether through the complete use of PPE.

The main problem here is that it’s almost impossible for a company to monitor their workers all day long for the use of PPE.

This is where AI comes in.

Computer vision-powered cameras are able to detect the likes of safety glasses, joint protectors, gloves, ear protectors, welding masks and goggles, high-vis jackets, face masks and hard hats. They can spot whenever a worker is wearing any or all of the above—and whenever a worker who should be wearing any or all of the above has omitted an item or two (or three).

At this point, we have to ask how effective computer vision is at spotting PPE.

A study provides us with some answers. It describes a real-time computer vision system, implemented at a construction site, that was trained for PPE and posture detection.

To construct the system, researchers amassed a huge dataset of 90+ videos using cameras installed onsite, before annotating the data and training an object detection model.

The results were strong.

Recall and identification ratios were over 95% and 83% respectively, while the classification of postures reached 64% in validation and 72% in model testing.

Looking to get hands on experience with labeling data? Check out What is Data Labeling and How to Do It Efficiently [Tutorial].

How is computer vision able to detect PPE?

The process starts by collecting thousands of images from video recordings of multiple construction sites (as many as 2,509 images, according to one paper), before using deep learning to train the model.

Deep learning is essential because, without it, training object detection algorithms to process huge swathes of data is impractical. And without these huge swathes of data, the computer vision system isn’t able to correctly differentiate objects or place them in context.

The model used in the paper went on to achieve a 0.96 F1 score, while recall and precision both averaged 96%.
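
For readers unfamiliar with the metric, the F1 score is the harmonic mean of precision and recall. The short sketch below shows the standard formulas; the detection counts in the example (96 correct detections, 4 false alarms, 4 misses) are invented purely to reproduce the 96% figures and do not come from the paper.

```python
def precision_recall(true_pos, false_pos, false_neg):
    """Precision and recall from raw detection counts."""
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    return precision, recall

def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Hypothetical counts chosen so that precision and recall are both 0.96.
p, r = precision_recall(true_pos=96, false_pos=4, false_neg=4)
print(round(f1_score(p, r), 2))  # 0.96
```

When precision and recall are equal, the F1 score equals that shared value, which is why 96% precision and recall line up with an F1 score of 0.96.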

Ultimately, using computer vision for PPE detection in the manufacturing industry helps to reduce workplace accidents while saving a company money. It also lowers insurance premiums and can promote a better working culture.

Moreover, the process for adding a computer vision system for the purpose of PPE detection is far from challenging. Companies can use CCTV, surveillance cameras and other sources to collect the data, before using an image annotation tool to label PPE.

Then, the object detection model can be trained and applied to the company’s computer vision system so that PPE is detected in real time.
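
Once a detector is running, the compliance check itself can be very simple. The sketch below assumes a hypothetical detector that returns `(label, confidence)` pairs for each worker in a frame; the required items, the label names and the 0.5 confidence cut-off are illustrative assumptions rather than the output format of any particular model.

```python
# Assumed site policy: every worker must wear these items.
REQUIRED_PPE = {"hard hat", "high-vis jacket", "safety glasses"}

def missing_ppe(detections, required=REQUIRED_PPE, min_confidence=0.5):
    """Return required items that were not detected confidently, sorted by name."""
    seen = {label for label, confidence in detections if confidence >= min_confidence}
    return sorted(set(required) - seen)

# Hypothetical detections for one worker in one frame.
worker = [("hard hat", 0.97), ("high-vis jacket", 0.42), ("gloves", 0.88)]
print(missing_ppe(worker))  # ['high-vis jacket', 'safety glasses']
```

Low-confidence detections are treated as missing here, which errs on the side of raising an alert; a real deployment would tune that trade-off.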

Reading text and barcodes

Optical character recognition (OCR) uses computer vision to detect and read text in images, whether that text is printed, pre-printed or stamped.

OCR is able to decipher text in different languages, fonts and styles and turn it into something meaningful, while at the same time digitising it. This gives businesses faster access to actionable data.

How does this help the manufacturing industry in particular?

Organisations typically experience a huge influx of incoming documents. OCR helps to ease the burden, speed up operations and reduce human error by extracting data from incoming documents and turning it into something business-ready, which can be indexed by title, contents and keywords before being acted on.

The usual steps needed for manual form processing are either reduced or eliminated altogether, which at the same time minimises—or altogether eradicates—human error. This is because OCR is able to identify data directly from scanned/printed images, thereby reducing data entry time.

OCR can carry out a number of tasks:

Expiration date verification

Label placement

Label verification

Shipping data management

Customer credits

Expedited product returns

Lot code and batch verification
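
As an illustration of the first task in the list, the sketch below takes text that an OCR step has already read from a label and checks that it contains a readable expiry date that has not passed. The `DD/MM/YYYY` date format, the function names and the sample label strings are assumptions for the example; real labels vary widely.

```python
import re
from datetime import date

# Assumed label format: DD/MM/YYYY, with "/", "." or "-" as separators.
DATE_PATTERN = re.compile(r"\b(\d{2})[/.\-](\d{2})[/.\-](\d{4})\b")

def parse_expiry(ocr_text):
    """Extract the first date found in the OCR text, or None if unreadable."""
    match = DATE_PATTERN.search(ocr_text)
    if match is None:
        return None
    day, month, year = (int(group) for group in match.groups())
    try:
        return date(year, month, day)
    except ValueError:
        return None  # e.g. 31/02/2030 is not a real date

def label_is_valid(ocr_text, today):
    """A label passes if its expiry date is readable and not in the past."""
    expiry = parse_expiry(ocr_text)
    return expiry is not None and expiry >= today

print(parse_expiry("BEST BEFORE 31/12/2030 LOT A17"))      # 2030-12-31
print(label_is_valid("EXP 01.01.2020", date(2021, 6, 1)))  # False
```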

For example, you can use V7’s public Text Scanner model for your OCR tasks:

Our Text Scanner works on all texts, including text that is:

Rotated

Diagonally slanted

Curved

Extremely low resolution

In any language

It also works on the following alphabets:

Latin

Cyrillic

Chinese simplified (Mandarin)

Japanese

Like all computer vision techniques, OCR isn’t yet perfect.

If lighting conditions are poor or the text or image is blurred, OCR’s capabilities could be lessened. However, there are already solutions in place that help OCR overcome these challenges, while its deep learning processes mean the system can become familiar with printed texts very quickly.

It’s also worth mentioning that numerous manufacturing companies have already adopted OCR.

Indeed, the OCR market is expanding at a huge rate. According to reports, its market size was valued at $7.46 billion in 2020 and is expected to grow at an annual rate of 16.7% over the seven years from 2021.

Inventory management

Locating stock in a large warehouse isn’t easy—in fact, it’s super challenging.

Indeed, monitoring warehouse inventory on the whole is tricky to do with accuracy and efficiency. The aim is to monitor it with as much accuracy as possible, while eliminating all or, at least, most errors.

But with so many tasks to complete, including inventory audits, tagging and labeling, avoiding the kind of errors that can have a detrimental effect on the whole supply chain is far from easy.

Unless businesses adopt computer vision systems, that is.

Make sure to read 9 Essential Features for a Bounding Box Annotation Tool before choosing a platform for training object detection models.

Computer vision is now used by manufacturing firms to:

Count stock

Take care of warehouse inventory status

Instantly alert the manager(s) whenever material that is needed for manufacturing purposes is running out

Traditionally, teams would track their inventory by walking around the warehouse with a pen and taking notes. This was time-consuming and subject to human error.

Worse still, it meant that tasks which could in theory be automated were being carried out by staff who could serve a more productive purpose elsewhere.

Computer vision automates the inventory management process by using techniques like object detection to track stock in real-time. It can locate empty containers, and ensure that restocking is fully optimised.

For instance, such a system can use an AI algorithm to recognise supplies and how many are available (or, indeed, not available).

By monitoring the supplies and materials, as well as their movement through the warehouse, computer vision cameras can quickly identify when there’s an empty space on a shelf, as well as alert managers each time stock needs to be replenished ASAP.
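
The counting and alerting logic described above can be sketched in a few lines. The example assumes a hypothetical object detection model that outputs one label per item it sees on the shelves; the SKU names and reorder levels are made up for illustration.

```python
from collections import Counter

def low_stock_alerts(detected_labels, reorder_levels):
    """Return {sku: count} for every SKU at or below its reorder level."""
    counts = Counter(detected_labels)  # SKUs that were never detected count as 0
    return {
        sku: counts[sku]
        for sku, level in reorder_levels.items()
        if counts[sku] <= level
    }

# Hypothetical detections from one pass of the warehouse cameras.
detected = ["bolt"] * 3 + ["bracket"] * 12
reorder_levels = {"bolt": 5, "bracket": 10, "washer": 2}

print(low_stock_alerts(detected, reorder_levels))  # {'bolt': 3, 'washer': 0}
```

Here the washers are flagged even though none were detected, which corresponds to the empty-shelf case mentioned above.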

Want to understand object detection better? Check out YOLO: Real-Time Object Detection Explained

Computer vision is also replacing the spreadsheets and clipboards that have been so intrinsic to inventory counts over the years with a platform that now displays automatically the information required in real time.

And because manufacturing companies have access to real time updates to their inventory, they will save huge swathes of time searching for products/supplies/materials.

Then there is autonomous technology (drones), which the likes of Amazon and PINC have already adopted for inventory management. By adopting them, Amazon was able to slash their operating costs by 20%, which helped them to improve their operating margins.

PINC, meanwhile, combines their drones with computer vision systems, cloud computing, RFID sensors and AI to track and monitor their warehouse assets.

What can drones do?

They can perform an inventory scan 100x faster than the average human worker. Even better, their inventory accuracy rate is close to 100%, while warehouse incidents and accidents are greatly reduced or even eliminated altogether.

Amazon has taken things further.

The eCommerce giant has also been working with AI-driven Kiva robots, which work on the factory floor, moving and stacking bins. These robots can also carry, transport and store merchandise that’s as heavy as 3,000 lbs.

The benefit of using robots to complete these tasks lies with the customers: Because warehouses are able to transfer more goods, they’re able to carry more inventory, which means customers will receive their orders faster.

Check out the 6 AI Applications That Are Shaping The Future of Retail.

Predictive maintenance

How awesome would it be if you could detect a machine failure … before it happens? This would save so much money on repair costs.

Detecting a machine failure before it happens requires three things:

The correct data

The ability to analyse the data

The proper corrective action

With predictive maintenance, this is all now possible.

What is predictive maintenance?

It uses the Internet of Things (IoT) in conjunction with machine learning to track machinery data, collect data points, find signals in them, and make the necessary adjustments so that components and assets don’t break down.

Data points are time stamped and help to provide an arsenal of machine performance metrics. Manufacturers can now train deep learning models so that they can find any potential defects in equipment and relay this information in real-time so that preventative action can be taken.

Potential problems might include leakage detection and fault diagnosis.
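
To show what "finding signals" in time-stamped data points can look like, here is a deliberately simple sketch. It is not a deep learning model: it swaps in a basic statistical baseline that flags any reading deviating sharply from the readings just before it. The window size, the threshold and the sample vibration values are assumptions for illustration.

```python
from statistics import mean, stdev

def flag_anomalies(readings, window=5, z_threshold=3.0):
    """readings: list of (timestamp, value) pairs in time order.

    Flags a timestamp when its value lies more than z_threshold standard
    deviations from the mean of the preceding `window` readings.
    """
    flagged = []
    for i in range(window, len(readings)):
        history = [value for _, value in readings[i - window:i]]
        mu, sigma = mean(history), stdev(history)
        timestamp, value = readings[i]
        if sigma > 0 and abs(value - mu) / sigma > z_threshold:
            flagged.append(timestamp)
    return flagged

# Hypothetical vibration readings, one per second, with a spike at t=6.
values = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 2.5, 1.0]
readings = list(enumerate(values))

print(flag_anomalies(readings))  # [6]
```

A production system would typically learn from far richer sensor histories, but the flow is the same: collect time-stamped readings, score them, and alert before the fault turns into a breakdown.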

Not just that, but such solutions let managers monitor the current status of all their machines. By tracking data in real time like this, they can initiate real-time responses, as well as quickly understand the forecasted state of damage.

Systems that use computer vision tech can also improve their asset planning and maintenance scheduling.

To use an example, data can tell a manager that if their team nudges their equipment’s run rate up so as to boost production volumes, it could result in significant damage. The system may also find that graphic sleeves on a bottle of pop are being stretched, and that therefore production methods need to be changed so that the manufacturer remains within spec.

To help with this, FANUC developed ZDT (Zero Down Time), a piece of software that gathers images from cameras, before sending them (and their accompanying metadata) to the cloud. Once the images have been processed, the software can flag any potential issues that may appear.

All of this is important because data has shown that predictive maintenance tools are reducing downtime by as much as 50%, while at the same time boosting machine life by up to 40%.

Moreover, just a single minute of downtime in an automotive factory, for example, can wipe $20,000 off the profits on high-profit cars, trucks and vans.

The future of Artificial Intelligence in Manufacturing

Companies are already leveraging AI to speed up their processes, improve safety, assist manual workers so that their skills can be used better elsewhere, and ultimately improve their bottom line.

The benefits of AI in manufacturing—which already include reduced costs and saved time—are set to continue, with companies able to spot problems before they arise, improve their product assembly line and use computer vision-based techniques to help scale their business.

Not started with computer vision yet?

The good news is that we’re still at the beginning—and there’s still time for you to introduce AI systems to your manufacturing processes.

V7 arms you with the tools needed to integrate computer vision into your existing applications, and you don’t even need to be an expert.

Contact V7 today to start testing for free to see how it can fit into your manufacturing systems.
