This guide breaks down what Hebbia does well, and where leading alternatives may better fit your workflow, so you can choose the platform aligned with your real bottleneck.

Imogen Jones

Content Writer

Hebbia has become one of the most talked-about “serious work” AI platforms, especially in financial workflows. That is in no small part because it treats complex document work like an analyst would: gather sources, reason step-by-step, and show receipts.

Its flagship product, Matrix, is built around the idea that you shouldn’t have to duct-tape prompts or manually stitch together outputs from PDFs, slides, spreadsheets, and transcripts. Instead, Matrix orchestrates models (including vision for charts and tables) and keeps citations visible so a human can validate quickly.

If you’re here, you’re probably either:

paying for Hebbia and wondering what else is out there in 2026, or

evaluating it and want to compare the broader landscape (from “just use an LLM” to full enterprise search to agent platforms).

This guide starts with what Hebbia is and why it works, then examines the leading alternatives, so you can be sure you're making the right choice for your workflow.

What is Hebbia?

At a high level, Hebbia is an AI platform designed to help teams answer complex questions from large sets of private documents, reliably, with traceability.

Hebbia was founded in 2020 by George Sivulka and is headquartered in New York City. It has raised over $160 million in venture capital since its founding.

Hebbia started from the premise that traditional search (and even early “semantic search”) didn’t match how knowledge workers actually work, especially when the answer requires pulling facts across many sources and formats.

The core product, Matrix, reflects that philosophy. Instead of a chat interface that generates a single answer, Matrix organizes work into structured rows and columns, allowing users to extract information across many documents simultaneously. It performs multi-step reasoning, references source material, and surfaces citations inline so users can verify outputs.
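
To make the rows-and-columns idea concrete, here is a minimal sketch of a document-by-question grid in which every cell carries its own citation. It is an illustration only, not Hebbia's implementation: the names `Cell`, `extract`, and `build_grid` are hypothetical, and a simple keyword match stands in for the model-driven extraction a real platform would use.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Cell:
    answer: Optional[str]    # extracted text, or None if nothing matched
    citation: Optional[str]  # "doc_id:sentence_index", so a reviewer can check the source


def extract(doc_id: str, text: str, keyword: str) -> Cell:
    """Stand-in for model extraction: return the first sentence containing the keyword."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    for i, sentence in enumerate(sentences):
        if keyword.lower() in sentence.lower():
            return Cell(sentence, f"{doc_id}:{i}")
    return Cell(None, None)


def build_grid(docs: dict, columns: dict) -> dict:
    """Rows are documents, columns are questions; each cell keeps its citation."""
    return {
        doc_id: {col: extract(doc_id, text, kw) for col, kw in columns.items()}
        for doc_id, text in docs.items()
    }


# Hypothetical sample data, for illustration only.
DOCS = {
    "contract_a": "This agreement starts in March. The governing law is New York. "
                  "Either party may terminate with 30 days notice.",
    "contract_b": "The governing law is Delaware. Fees are payable quarterly.",
}
COLUMNS = {"Governing law": "governing law", "Termination": "terminate"}

grid = build_grid(DOCS, COLUMNS)
for doc_id, row in grid.items():
    for column, cell in row.items():
        print(doc_id, "|", column, "|", cell.answer, "|", cell.citation)
```

The point of the structure is that an empty cell is visible as a gap (here, `contract_b` has no termination clause) instead of being papered over by a single generated answer.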

OpenAI has published a case study describing how Hebbia uses OpenAI models inside Matrix; it reports large gains over “out-of-the-box RAG” on a benchmark spanning legal and financial documents.

In short, Hebbia attempts to replicate how a highly capable analyst works, but at machine scale.

Hebbia Pricing 2026

Hebbia doesn't publicly list pricing on its website, and as of 2026, it continues to operate on an enterprise sales model.

Hebbia deployments for larger organizations, particularly in finance and law, are generally understood to fall in the mid-to-high six-figure annual range. That is an estimate based on market positioning, not a published figure. Smaller pilots may begin at lower tiers, but Hebbia is not positioned as an SMB self-serve product.

Who uses Hebbia?

Hebbia is most often evaluated for:

Due diligence / data rooms (find risks, obligations, clauses, numbers across thousands of docs)

Contract analysis (compare clauses, extract key terms, flag inconsistencies)

Investment research (summarize filings, market research, transcripts; build comparisons)

High-stakes knowledge work where you need auditability and speed, not just “a plausible answer”

Hebbia Alternatives 2026

Here are some of the leading alternatives to consider if you are using Hebbia, or think a platform like it could be helpful in your stack.

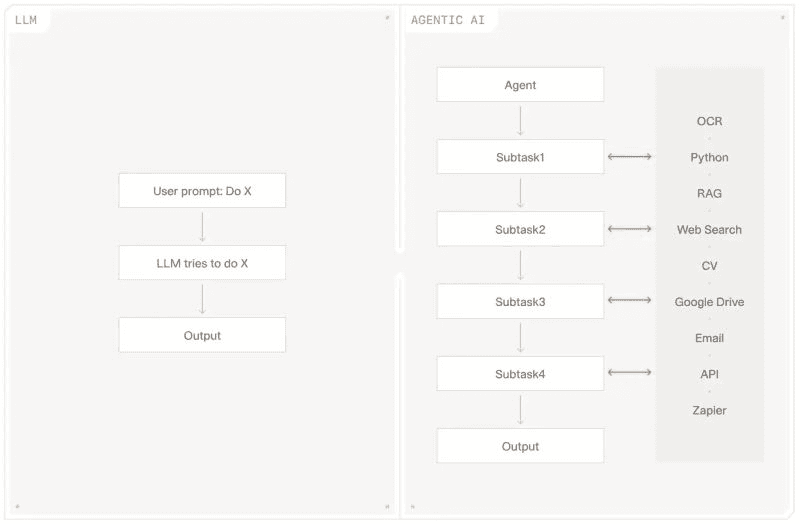

Option 1: The General LLM Stack (OpenAI / Claude / Gemini + RAG)

Before evaluating any dedicated platform, it’s worth acknowledging the obvious baseline: using frontier models directly.

Many teams in 2026 experiment with connecting models like GPT, Claude, or Gemini to their internal documents via retrieval-augmented generation (RAG). You index your corpus, retrieve relevant chunks, pass them to the model, and generate answers. For pilots and lightweight research tasks, this can work well. It’s fast to test and useful for summarization or basic Q&A.
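
The index, retrieve, and prompt steps described above can be sketched in a few lines. This is a toy version under stated assumptions: it uses bag-of-words cosine similarity from the standard library in place of a real embedding model and vector store, the sample chunks are invented, and the final model call is left out because it depends on whichever provider you use. All function names are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words vector; a real stack would use an embedding model here."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(chunks: dict) -> dict:
    """Step 1: index the corpus (chunk id -> vector)."""
    return {chunk_id: vectorize(text) for chunk_id, text in chunks.items()}


def retrieve(query: str, index: dict, k: int = 2) -> list:
    """Step 2: return the ids of the k chunks most similar to the query."""
    q = vectorize(query)
    ranked = sorted(index, key=lambda cid: cosine(q, index[cid]), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: dict, chunk_ids: list) -> str:
    """Step 3: pass the retrieved chunks to the model, tagged with ids for citation."""
    context = "\n".join(f"[{cid}] {chunks[cid]}" for cid in chunk_ids)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n\nQuestion: {query}"
    )


# Invented sample chunks, for illustration only.
CHUNKS = {
    "10k-p12": "Revenue for fiscal 2024 was 120 million dollars, up from 100 million.",
    "lease-p3": "The lease term is ten years with a renewal option.",
    "call-q3": "Management expects revenue growth to slow next year.",
}
INDEX = build_index(CHUNKS)

question = "What was revenue in fiscal 2024?"
top = retrieve(question, INDEX, k=2)
print(top)  # ['10k-p12', 'call-q3']
print(build_prompt(question, CHUNKS, top))
```

Even in this toy form, the weak points are visible: the second retrieved chunk only matches on the word "revenue", which is the kind of noise that grows with corpus size.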

The challenge appears when moving from demo to production.

As document volumes grow, retrieval quality degrades without careful tuning. Complex PDFs with tables or scans require specialized parsing. Multi-step reasoning across large corpora increases the risk of inconsistencies. Reproducibility, permissions, logging, and governance all require deliberate engineering effort.

Most importantly, while a general LLM stack typically answers questions, it doesn’t inherently automate workflows. Dedicated platforms and agentic AI systems go further by structuring multi-step processes, enforcing validation, generating standardized outputs, and integrating directly into operational pipelines.

For experimentation or simple conversational interactions, a general LLM stack is often sufficient. For reliable, repeatable document workflows at scale, or as a complete Hebbia replacement, purpose-built platforms usually provide a more complete solution.

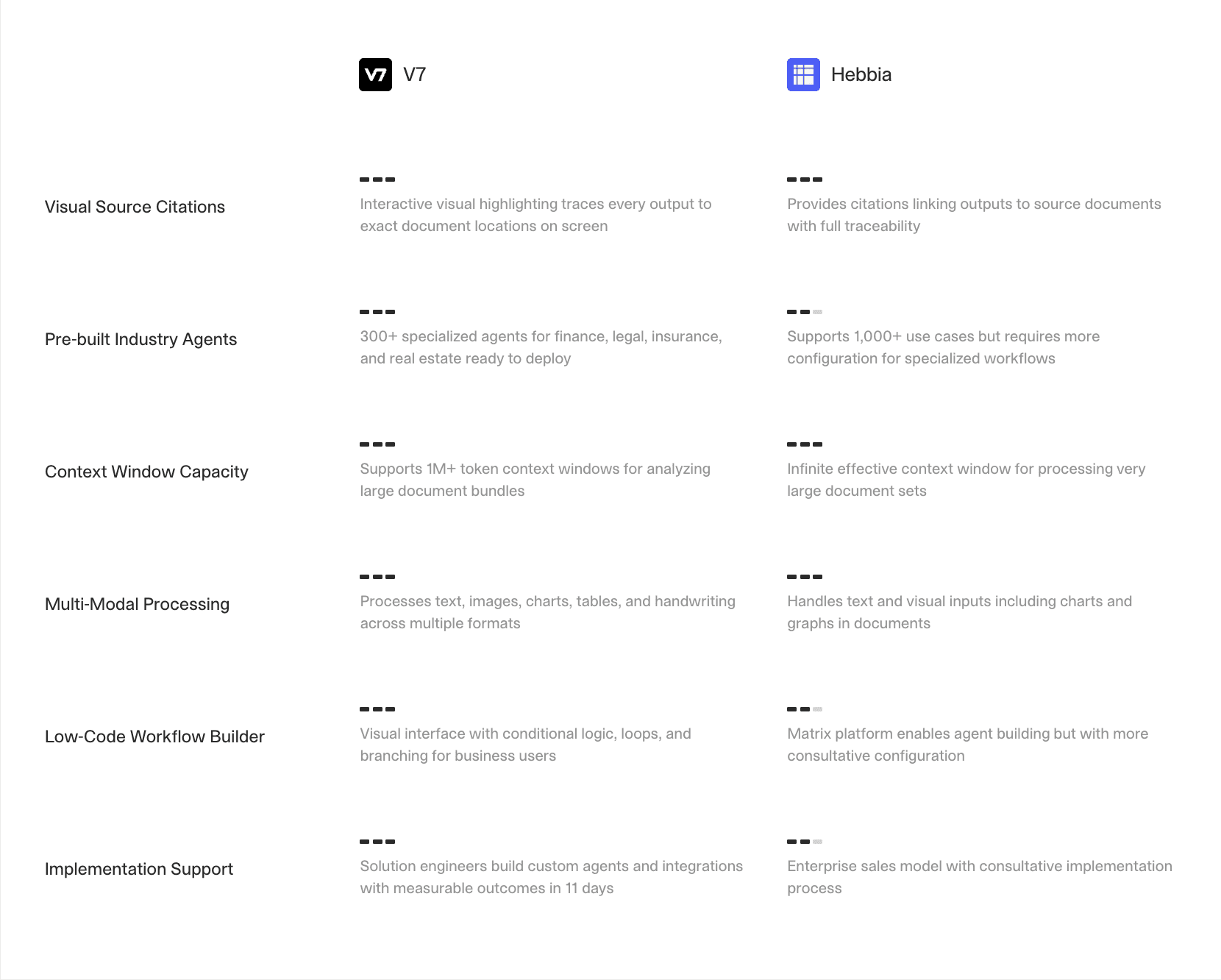

Option 2: V7 Go

Website: V7 Go

V7 Go is an AI agent platform designed for document-intensive workflows across finance, legal, insurance, and operations.

At its foundation is industry-leading multi-modal ingestion. V7 Go can understand and extract both structured and unstructured data from virtually any document format, from scanned PDFs to complex Excel models, dense tables to multilingual files. But ingestion is only the starting point.

Where V7 Go stands apart is in workflow orchestration.

Teams can:

Chain AI tasks into full workflows with logic, conditions, branching, and human review checkpoints. That means outputs don’t just inform decisions, they trigger actions.

Generate structured, production-ready outputs (JSON, CSV, formatted reports) that plug directly into CRMs, ERPs, data warehouses, or internal systems.

Traceability is a defining feature. Every extracted data point is visually linked to its original source using bounding boxes, creating a clear, defensible audit trail. If an agent pulls revenue from page 47 of a CIM, you can click directly to the highlighted section. This dramatically reduces hallucination risk and makes the platform well-suited to compliance-heavy environments like finance, legal, and insurance.
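
As a rough sketch of how a source-linked field, a review checkpoint, and a structured output fit together, consider the example below. It does not use V7 Go's API or schema: the class names, the confidence score, the 0.9 threshold, the file name, and all sample values are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass
class SourceRef:
    document: str
    page: int
    bbox: Tuple[float, float, float, float]  # x0, y0, x1, y1 on the page (hypothetical units)


@dataclass
class ExtractedField:
    name: str
    value: float
    confidence: float  # assumed to be supplied by the extraction step
    source: SourceRef


def route(field: ExtractedField, threshold: float = 0.9) -> str:
    """Branching step: low-confidence fields go to a human review checkpoint."""
    return "auto_approve" if field.confidence >= threshold else "human_review"


def to_record(field: ExtractedField) -> dict:
    """Structured output: the value, its source location, and its routing status."""
    record = asdict(field)
    record["status"] = route(field)
    return record


# Hypothetical sample values: revenue read from page 47 of a CIM.
revenue = ExtractedField(
    name="revenue",
    value=12_500_000.0,
    confidence=0.97,
    source=SourceRef("cim.pdf", 47, (72.0, 310.5, 240.0, 326.0)),
)
ebitda = ExtractedField(
    name="ebitda",
    value=3_100_000.0,
    confidence=0.62,
    source=SourceRef("cim.pdf", 48, (72.0, 120.0, 240.0, 136.0)),
)

payload = json.dumps([to_record(revenue), to_record(ebitda)], indent=2)
print(payload)
```

Because each record carries the page and bounding box alongside the value, a downstream system (or a reviewer) can jump from the JSON straight back to the highlighted region in the source.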

V7 Go also includes Knowledge Hubs, a kind of Virtual Data Room, which connect agents to internal playbooks, policies, and reference documents. Instead of retraining models, teams provide structured access to institutional knowledge. As those source documents evolve, the intelligence remains current.

This agentic design makes V7 Go especially strong when the goal extends beyond research to reliable workflow automation. If your priority is not only extracting insights but accelerating business processes, V7 Go’s agents can orchestrate the steps end-to-end.

Importantly, V7 Go is not purely aimed at finance or specific legal use cases. Its architecture is designed to accelerate and enhance workflows across the entire organization, including back-office operations.

Learn more in our detailed comparison, V7 vs. Hebbia.

Option 3: Glean

Website: Glean

Glean, founded in 2019 in California, is a leading enterprise “Work AI” platform. It focuses on company-wide search and knowledge discovery.

Unlike Hebbia, which is often deployed for bounded, document-heavy workflows (such as diligence or contract review), Glean aims to connect across enterprise systems (Google Drive, Slack, Jira, Confluence, and more) and provide a unified search and assistant experience.

If your organization struggles with internal knowledge fragmentation, Glean may be a more natural solution. It excels at helping employees find existing information across tools, respecting permissions and surfacing relevant context.

However, Glean is typically less specialized for structured cross-document extraction workflows. It prioritizes discoverability and enterprise search over analyst-grade comparative reasoning.

Organizations deciding between Hebbia and Glean should clarify whether their problem is “deep document analysis” or “company-wide knowledge access.”

Option 4: Coveo

Website: Coveo

Coveo and Hebbia are both AI-powered information retrieval platforms, but they cater to different primary needs. Coveo focuses on AI-driven search, personalization, and relevance for e-commerce and customer service.

Coveo’s strength lies in search performance tuning. The platform uses machine learning to rank results, personalize content, and continuously optimize relevance based on user behavior and analytics. For organizations that treat search as a product capability (something that directly impacts customer conversion, support efficiency, or employee productivity) Coveo provides significant flexibility and measurement tools.

Unlike Hebbia, Coveo is not primarily designed for structured diligence-style workflows or cross-document comparative reasoning. Instead, it excels at delivering fast, relevant results at scale across large, distributed content ecosystems.

Coveo can integrate with, and draw data from, a number of enterprise software platforms.

Option 5: Microsoft 365 Copilot and Copilot Search

Website: Copilot

Hands up if you work in finance? Hands up if you spend a lot of time in Microsoft Excel?

That's a lot of hands.

For organizations deeply embedded in the Microsoft ecosystem, Microsoft 365 Copilot can feel like a pragmatic alternative to Hebbia. Copilot Search operates within the Microsoft Graph, grounding responses in SharePoint, OneDrive, Teams, Outlook, and connected enterprise sources. Rather than introducing a new standalone platform, it layers AI across your existing productivity environment.

A key advantage is governance and deployment simplicity. Permissions already exist, IT control is centralized, and adoption can happen organically.

However, compared to Hebbia, Copilot serves a different primary purpose.

Hebbia is designed as a structured analyst workflow engine, organizing cross-document reasoning into traceable, repeatable analysis. Copilot, by contrast, is a general productivity assistant. It can retrieve context across Microsoft tools, but it is not purpose-built for diligence-style extraction, structured comparative analysis, or large-scale data room interrogation.

In practice, organizations evaluating Copilot vs. Hebbia should ask:

Do we need deep, structured document analysis with explicit citations and repeatability?

Or do we primarily need better knowledge retrieval and AI assistance inside our existing productivity suite?

It’s also worth noting that user experiences with Copilot vary. Some teams report inconsistent results when handling highly complex documents or multi-step reasoning tasks at scale.

Choosing the Right Hebbia Alternative 2026

If your primary need is deep, structured reasoning across large document sets with clear citations, Hebbia is a strong option and a well-known name in finance and legal workflows.

That said, it’s one of several credible platforms in a fast-moving category. AI platforms are advancing rapidly, and new capabilities are emerging every quarter.

The best approach is to evaluate platforms against a real workflow (not a polished demo) and assess performance, traceability, integration depth, and total operational impact.

If you'd like to try V7 Go as an alternative to Hebbia, book a chat with our team to see how agentic document automation could fit into your workflow.

What is Hebbia primarily used for?

Hebbia is typically used by investment banks, private equity firms, and legal teams to analyze large document sets — such as CIMs, data rooms, contracts, and filings — by asking structured questions across multiple files at once.

Is Hebbia a full workflow automation platform?

Not exactly. Hebbia is powerful for extracting and comparing information across documents, but many firms still rely on separate tools for modeling, memo drafting, research, dashboards, and system integration. Teams seeking alternatives often want a platform that orchestrates end-to-end processes, not just document analysis.

Can general-purpose LLMs replace Hebbia?

Consumer LLM tools like ChatGPT or Claude can analyze documents and answer questions, but they often struggle with multi-document comparisons at scale, consistent structured outputs, and enterprise governance requirements. For exploratory work, they can be useful. For high-stakes financial workflows requiring repeatability and auditability, purpose-built AI orchestration platforms are typically more reliable.

What should finance teams look for in a Hebbia alternative?

In 2026, finance teams should assess whether a platform can process entire data rooms, reason over complex Excel models, generate structured and exportable outputs, provide clear citations, and integrate with existing systems. Equally important is the ability to run repeatable workflows aligned with a firm’s internal investment criteria or compliance standards.

Are Hebbia alternatives secure enough?
